Deploy Model Endpoint for yolov8

YOLOv8 is the newest model in the YOLO algorithm series – the most well-known family of object detection and classification models in the Computer Vision (CV) field.

The tutorial will mainly focus on the following:

For the scope of this tutorial, we will use pre-built container (Yolov8) for the model endpoint but you may choose to create your own custom container by following this tutorial .

In most cases, the pre-built container would work for your use case. The advantage is - you won’t have to worry about building an API handler. API handler will be automatically created for you.

So let’s get started!

A guide on Model Endpoint creation & video processecing using yolo

Step 1: Create a Model Endpoint for YOLO on TIR

Go to TIR AI Platform

Choose a project

Go to Model Endpoints section

Click on Create Endpoint button on the top-right corner

Choose YOLOv8 model card in the Choose Framework section

Pick any suitable GPU or CPU plan of your choice. You can proceed with the default values for replicas, disk-size & endpoint details.

Add your environment variables, if any. Else, proceed further

Model Details: For now, we will skip the model details and continue with the default model weights.

If you wish to load your custom model weights (fine-tuned or not), select the appropriate model. (See Creating Model endpoint with custom model weights section below)

Complete the endpoint creation

Step 2: Generate your API TOKEN

The model endpoint API requires a valid auth token which you’ll need to perform further steps. So, let’s generate one.

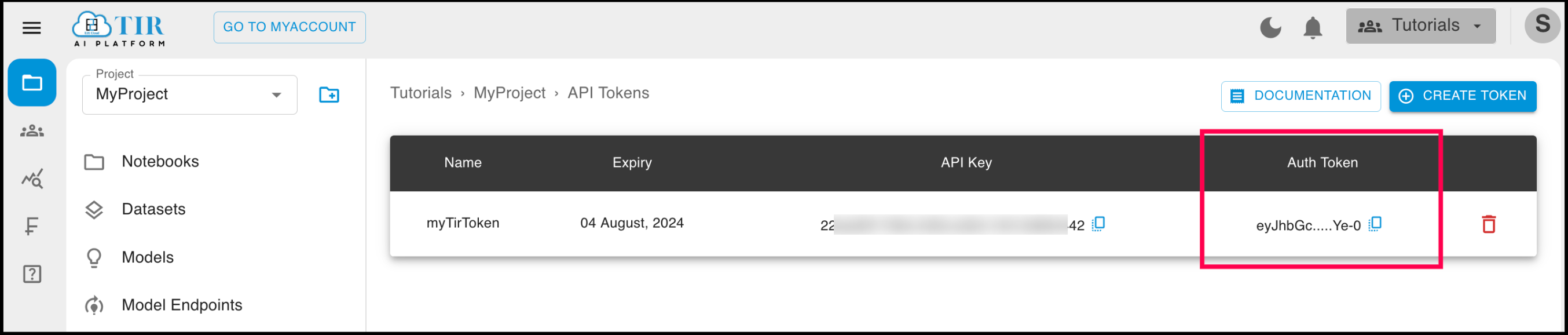

Go to API Tokens section under the project.

Create a new API Token by clicking on the Create Token button on the top right corner. You can also use an existing token, if already created.

Once created, you’ll be able to see the list of API Tokens containing the API Key and Auth Token. You will need this Auth Token in the next step.

Step 3: Generate a Video with Object Detection using Yolov8 Model

There are some steps involved to use the yolov8 model Endpoints

First you have to create a bucket to uplaod your input video in it please refer Bucket Creation

After creating the bucket upload your input video to that bucket please refer Object upload in Bucket

Once your model endpoint is Ready, visit the Sample API Request section of that model endpoint and copy the Python cod or curl request

You will get two python script or curl request one is for processecing the video and another is to track the video processecing

Launch a TIR Notebook with PyTorch or any appropriate Image with any basic machine plan. Once it is in Running state, launch it, and start a new notebook untitled.ipynb in the jupyter labs

Paste the Sample API Request code (for Python) in the notebook cell. Below is the sample code.

- For processing a video the python script is given below

import requests # enter your auth_token here auth_token = "your-auth-token" url = "https://jupyterlabs.e2enetworks.net/project/p-<project-id>/endpoint/is-<inference-id>/predict" payload = { "input": "$INPUT_VIDEO_FILE_NAME", # Path to the video file in the bucket you want to upload "bucket_name": "$BUCKET_NAME", # bucket name where input video file is stored and output will be stored "access_key": "$ACCESS_KEY", # Access key of bucket "secret_key": "$SECRET_KEY"} # Secret key of bucket headers = { 'Content-Type': 'application/json', 'Authorization': f'Bearer {auth_token}' } response = requests.post(url, json=payload, headers=headers) print(response.content) # This will provide a JOB_ID which you can use to track the status of video processing by using the request below

You have to mention the correct input path in the input section , bucket_name , access_key and secret_key in the payload Otherwise it will lead to error

You will get a job id by hitting the above url by using that url you can track the status of video processing

- For tracking the status of video processing using the job id the python script is given below

import requests # enter your auth_token here auth_token = "your_auth_token" url = "https://jupyterlabs.e2enetworks.net/project/p-<project-id>/endpoint/is-<inference-id>/status/$JOB_ID" headers = { 'Content-Type': 'application/json', 'Authorization': f'Bearer {auth_token}' } response = requests.get(url, headers=headers) print(response.content)

Please mention the correct job id in the url

Copy the Auth Token generated in Step-2 & use it in place of $AUTH_TOKEN in the Sample API Request

- Also mention appropriate input, bucket_name, access_key and secret_key in the above python script.

Note

Video output file should of .avi extenstion. As of now we are supporting only .avi files as output.

Execute the code & send request

You can view your video which has been downloaded in the bucket and the output path is yolov8/outputs/.

That’s it! Your Yolov8 model endpoint is up & ready for inference.

You can also try providing different images see the generated videos. model support only detection of objects

Creating Model endpoint with custom model weights

To create Inference against Yolov8 model with custom model weights, we will:

Download the yolomodel in pyttorch format like “yolo.pt”. No other format is supported

Upload the model to Model Bucket (EOS)

Create an inference endpoint (model endpoint) in TIR to serve API requests

Step 1.1: Define a model in TIR Dashboard

Before we proceed with downloading or fine-tuning (optional) the model weights, let us first define a model in TIR dashboard.

Go to TIR AI Platform

Choose a project

Go to Model section

Click on Create Model

Enter a model name of your choosing (e.g. stable-video-diffusion)

Select Model Type as Custom

Click on CREATE

You will now see details of EOS (E2E Object Storage) bucket created for this model.

EOS Provides a S3 compatible API to upload or download content. We will be using MinIO CLI in this tutorial.

Copy the Setup Host command from Setup Minio CLI tab to a notepad or leave it in the clipboard. We will soon use it to setup MinIO CLI

Note

In case you forget to copy the setup host command for MinIO CLI, don’t worry. You can always go back to model details and get it again.

Step 2: Upload the model to Model Bucket (EOS)

Uplaod the model to the bucket you can use minio client for this

# push the contents of the folder to EOS bucket.

# Go to TIR Dashboard >> Models >> Select your model >> Copy the cp command from **Setup MinIO CLI** tab.

# The copy command would look like this:

# mc cp -r <MODEL_NAME> stable-video-diffusion/stable-video-diffusion-854588

# here we replace <MODEL_NAME> with '*' to upload all contents of snapshots folder

mc cp -r * stable-video-diffusion/stable-video-diffusion-854588

Note

The model directory name may be a little different (we assume it is models–stabilityai–stable-video-diffusion-img2vid-xt). In case, this command does not work, list the directories in the below path to identify the model directory

$HOME/.cache/huggingface/hub

Step 3: Create an endpoint for our model

Head back to the section on A guide on Model Endpoint creation & for object detection of a video above and follow the steps to create the endpoint for your model.

While creating the endpoint, make sure you select the appropriate model in the model details sub-section, If your model is not in the root directory of the bucket, make sure to specify the path where the model is saved in the bucket.

You need to mention the correct path of model and make sure the extenstion of model shoult by pt