Persistent Volume using SFS

Deploy a resilient and fault-tolerant persistent volume utilizing SFS within a Kubernetes environment.

Method 1: Static Provisioning using PersistentVolume

Step 1: Create a Scalable File System (SFS)

- In the left-hand navigation panel, click on Scalable File System (SFS) under the storage section.

- On the SFS dashboard, click the Create SFS button to begin creating a new Scalable File System.

- Fill in the required details:

  - Enter a name for your SFS.

  - Select the plan and VPC.

  - Click Create SFS to confirm.

- Once created, the new SFS will appear in the SFS list with its name, plan, and current status.

Step 2: Grant All Access

- In the SFS list, select the SFS you want to configure.

- From the Actions menu associated with that SFS, click Grant all access.

- A confirmation dialog will appear. Click Grant to allow all resources within the same VPC (including Nodes, Load Balancers, Kubernetes, and DBaaS) to access this SFS.

Once granted, the process starts and completes within a few minutes. You can verify the configured Virtual Private Cloud (VPC) under the ACL tab of the SFS.

Step 3: Download the Kubeconfig File

- Navigate to your Kubernetes cluster in the MyAccount portal.

- In the cluster details section, locate and click the Download Kubeconfig option to save the kubeconfig.yaml file to your local machine.

- Ensure kubectl is installed on your system. To install it, follow the official guide.

- Run the following command to verify connectivity and retrieve the list of nodes in the cluster:

kubectl get nodes

The output will list all nodes in the cluster along with their status and roles.
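If kubectl does not pick up the downloaded file automatically, one common approach is to point the KUBECONFIG environment variable at it. The helper below is a minimal sketch: the function name use_kubeconfig and the download path in the usage comment are illustrative assumptions, not part of the portal's instructions.

```shell
# Point kubectl at a downloaded kubeconfig for the current shell session.
# use_kubeconfig is a hypothetical helper name used only for this example.
use_kubeconfig() {
  config_path="$1"
  if [ ! -f "$config_path" ]; then
    echo "kubeconfig not found: $config_path" >&2
    return 1
  fi
  # kubectl reads the KUBECONFIG environment variable to locate its config.
  export KUBECONFIG="$config_path"
}

# Example (assumed download location; adjust to where you saved the file):
#   use_kubeconfig "$HOME/Downloads/kubeconfig.yaml" && kubectl get nodes
```

The setting lasts only for the current shell session, so other clusters configured in the default ~/.kube/config are left untouched.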

Step 4: Create a PersistentVolume

You can use the SFS volume through a PersistentVolumeClaim. First, create a PersistentVolume that references the SFS server. Then create a PersistentVolumeClaim bound to it. Finally, mount the PVC into a pod.

Create a file named pv.yaml with the following content. Replace 10.10.12.10 with your actual SFS server IP address:

cat << EOF > pv.yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-pv
  labels:
    name: mynfs # name can be anything
spec:
  storageClassName: manual # same storage class as pvc
  capacity:
    storage: 200Mi
  accessModes:
    - ReadWriteMany
  nfs:
    server: 10.10.12.10 # SFS server IP address
    path: "/data"
EOF

Apply the PersistentVolume:

kubectl apply -f pv.yaml

Step 5: Create a PersistentVolumeClaim

Create a PersistentVolumeClaim that binds to the PersistentVolume created above. Create a file named pvc.yaml with the following content:

cat << EOF > pvc.yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfs-pvc
spec:
  storageClassName: manual
  accessModes:
    - ReadWriteMany # must be the same as PersistentVolume
  resources:
    requests:
      storage: 50Mi
EOF

Apply the PersistentVolumeClaim:

kubectl apply -f pvc.yaml
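Before mounting the claim, it can help to confirm that it actually bound to the PersistentVolume. The sketch below polls the claim's phase with kubectl's jsonpath output. The function name wait_for_pvc_bound, the default of 30 attempts, and the 2-second interval are assumptions chosen for illustration.

```shell
# Poll a PVC until it reports the Bound phase, or give up after N attempts.
# wait_for_pvc_bound is a hypothetical helper name for this example.
wait_for_pvc_bound() {
  pvc_name="$1"
  attempts="${2:-30}"   # number of polls before giving up (assumed default)
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # .status.phase is Pending until the claim is matched to a volume.
    phase="$(kubectl get pvc "$pvc_name" -o jsonpath='{.status.phase}')"
    if [ "$phase" = "Bound" ]; then
      echo "$pvc_name is Bound"
      return 0
    fi
    i=$((i + 1))
    sleep 2
  done
  echo "$pvc_name not Bound (last phase: ${phase:-unknown})" >&2
  return 1
}

# Example:
#   wait_for_pvc_bound nfs-pvc
```

If the claim stays Pending, the usual suspects are a storageClassName or access mode that differs between the claim and the volume, or a request larger than the volume's capacity.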

Step 6: Create a Deployment

Create a file named deployment.yaml with the following content:

cat << EOF > deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx
  name: nfs-nginx
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      volumes:
        - name: nfs-test
          persistentVolumeClaim:
            claimName: nfs-pvc # name of the PVC created in Step 5
      containers:
        - image: nginx
          name: nginx
          volumeMounts:
            - name: nfs-test # must match the volume name defined under volumes
              mountPath: /usr/share/nginx/html # mount path inside the container
EOF

Apply the Deployment:

kubectl apply -f deployment.yaml

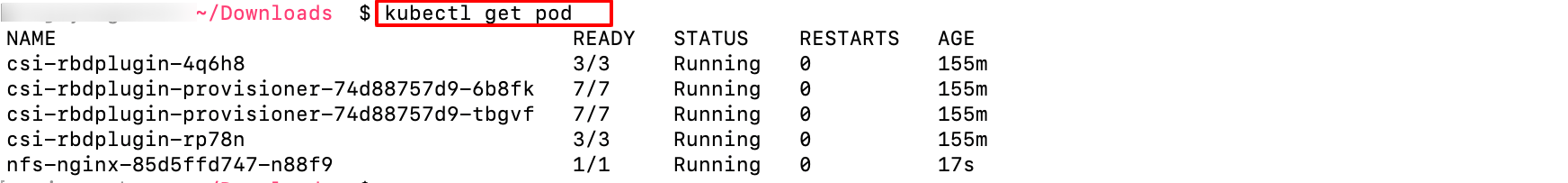
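A pod that cannot mount the share typically stays in ContainerCreating, so it is worth waiting for the rollout to finish before moving on. The following is a small sketch around kubectl rollout status. The wrapper name wait_for_rollout and the 120-second default timeout are assumptions for illustration.

```shell
# Block until the Deployment's rollout completes or the timeout expires.
# wait_for_rollout is a hypothetical helper name for this example.
wait_for_rollout() {
  deployment="$1"
  timeout="${2:-120s}"   # assumed default; raise it for slow image pulls
  kubectl rollout status "deployment/$deployment" --timeout="$timeout"
}

# Example:
#   wait_for_rollout nfs-nginx
```

A non-zero exit status means the rollout did not complete in time. In that case, kubectl describe pod on the affected pod usually shows the mount error in its events.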

Step 7: Get Pod Details

Run the following command to retrieve information about the pods running in the cluster:

kubectl get pod

The output lists all running pods along with their status, restart count, and age.

Step 8: Execute a Command Inside the Pod

Run the following command to open an interactive shell inside a running container. Replace the pod name with the actual name from the previous step's output:

kubectl exec -it nfs-nginx-85d5ffd747-n88f9 -- /bin/bash

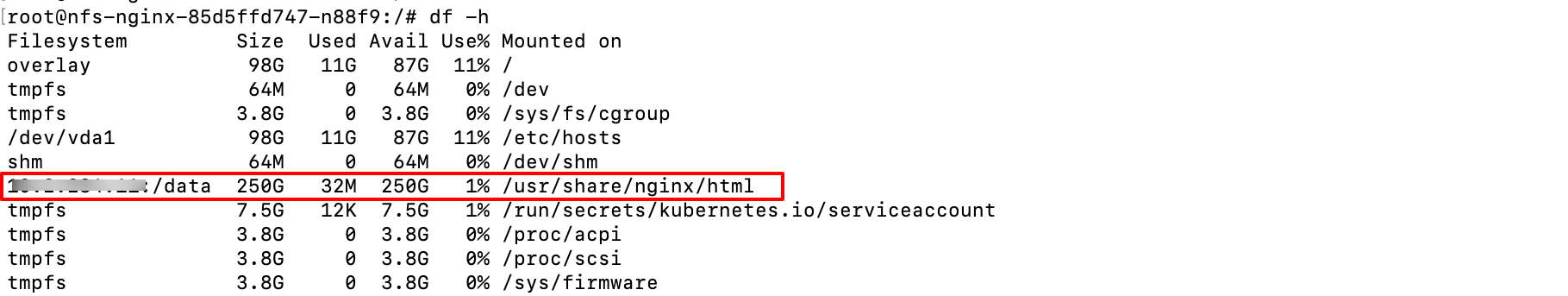
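Pod names include a generated suffix, so copying them by hand is error-prone. As a sketch, the name can be looked up by label instead. This assumes the app=nginx label from the Deployment above, and the helper name first_pod is hypothetical.

```shell
# Print the name of the first pod that matches a label selector.
# first_pod is a hypothetical helper name for this example.
first_pod() {
  selector="$1"
  # jsonpath returns an error if no pod matches the selector.
  kubectl get pods -l "$selector" -o jsonpath='{.items[0].metadata.name}'
}

# Example:
#   kubectl exec -it "$(first_pod app=nginx)" -- /bin/bash
```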

Step 9: Check Disk Space Usage

Run the following command inside the pod to display disk space usage statistics in a human-readable format:

df -h

This lists all mounted file systems, including total size, used space, available space, and usage percentage. Use this to monitor disk space and identify potential storage issues.
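The df -h output shows that the share is mounted, but not that it is writable. A write-and-read round trip, run from your workstation rather than from the pod's shell, is one way to check. The sketch below assumes the export permits writes from the container's user. The helper name verify_share and the marker file name sfs-check.txt are illustrative.

```shell
# Write a marker file through the pod's mount, read it back, then clean up.
# verify_share is a hypothetical helper name for this example.
verify_share() {
  target="$1"       # pod name, or deploy/<deployment-name>
  mount_path="$2"   # mountPath from the Deployment
  marker="sfs-check-$$"
  kubectl exec "$target" -- sh -c "echo $marker > $mount_path/sfs-check.txt" || return 1
  read_back="$(kubectl exec "$target" -- cat "$mount_path/sfs-check.txt")" || return 1
  # Remove the marker so the share is left as it was found.
  kubectl exec "$target" -- rm -f "$mount_path/sfs-check.txt"
  [ "$read_back" = "$marker" ]
}

# Example:
#   verify_share deploy/nfs-nginx /usr/share/nginx/html && echo "share is writable"
```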

Method 2: Dynamic Provisioning using StorageClass

Steps 1, 2, and 3 are the same as in Method 1 above. Continue from Step 4 below.

Step 4: Install Helm and the NFS CSI Driver

Install Helm by following the official Helm quickstart guide.

Once Helm is installed, add the NFS CSI driver repository and install the driver:

helm repo add csi-driver-nfs https://raw.githubusercontent.com/kubernetes-csi/csi-driver-nfs/master/charts

helm install csi-driver-nfs csi-driver-nfs/csi-driver-nfs --namespace kube-system --version v4.9.0
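Before creating the StorageClass, you may want to confirm that the driver's pods are running. The sketch below assumes the chart labels its pods with app.kubernetes.io/instance set to the Helm release name (csi-driver-nfs in the command above). The helper name csi_nfs_pods is hypothetical.

```shell
# List the NFS CSI driver pods installed by the Helm release.
# csi_nfs_pods is a hypothetical helper name for this example.
csi_nfs_pods() {
  release="${1:-csi-driver-nfs}"   # Helm release name used at install time
  kubectl --namespace kube-system get pods \
    --selector "app.kubernetes.io/instance=$release"
}

# Example:
#   csi_nfs_pods
```

If the list is empty, run helm list --namespace kube-system to check that the release exists under the expected name.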

Step 5: Create a StorageClass

Create a file named sc.yaml with the following content. Replace 10.10.12.10 with your actual SFS server IP address:

cat << EOF > sc.yaml
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: nfs-csi
provisioner: nfs.csi.k8s.io
parameters:
  server: 10.10.12.10 # SFS server IP address
  share: /data
reclaimPolicy: Delete
volumeBindingMode: Immediate
mountOptions:
  - nfsvers=4.1
EOF

Apply the StorageClass:

kubectl apply -f sc.yaml

Step 6: Create a PersistentVolumeClaim

Create a file named pvc-sc.yaml with the following content:

cat << EOF > pvc-sc.yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfs-pvc-1
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: nfs-csi # Reference to the NFS storage class
  resources:
    requests:
      storage: 500Gi # Requested storage size
EOF

Apply the PersistentVolumeClaim:

kubectl apply -f pvc-sc.yaml
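With dynamic provisioning, the PersistentVolume is created for you, so its name is not known in advance. A minimal sketch for looking it up from the claim follows. The helper name bound_volume is hypothetical.

```shell
# Print the name of the PersistentVolume a claim is bound to.
# The output is empty while the claim is still Pending.
# bound_volume is a hypothetical helper name for this example.
bound_volume() {
  pvc_name="$1"
  kubectl get pvc "$pvc_name" -o jsonpath='{.spec.volumeName}'
}

# Example:
#   kubectl get pv "$(bound_volume nfs-pvc-1)"
```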

Step 7: Create a Deployment

Create a file named deployment.yaml with the following content:

cat << EOF > deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx
  name: nfs-nginx
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      volumes:
        - name: nfs-test
          persistentVolumeClaim:
            claimName: nfs-pvc-1 # name of the PVC created in Step 6
      containers:
        - image: nginx
          name: nginx
          volumeMounts:
            - name: nfs-test # must match the volume name defined under volumes
              mountPath: /usr/share/nginx/html # mount path inside the container
EOF

Apply the Deployment:

kubectl apply -f deployment.yaml

Step 8: Get Pod Details

Run the following command to get details of the pods running in your Kubernetes cluster:

kubectl get pods

Step 9: Execute a Command Inside the Pod

Run the following command to open an interactive shell inside a running container. Replace the pod name with the actual name from the previous step's output:

kubectl exec -it nfs-nginx-85d5ffd747-n88f9 -- /bin/bash

Step 10: Check Disk Space Usage

Run the following command inside the pod to display disk space usage statistics in a human-readable format:

df -h

Disable All Access

Disabling all access prevents all resources within the same VPC (including Nodes, Load Balancers, Kubernetes, and DBaaS) from accessing this SFS.

To disable all access from an SFS:

- In the SFS list, select the SFS you want to restrict.

- From the Actions menu, click Disable all access.

- A confirmation dialog will appear. Click Disable to confirm. Access is then denied to all services within the VPC.

Once confirmed, the process starts and completes within a few minutes. You can verify the updated access configuration under the ACL tab of the SFS.