NGINX Ingress Controller

How to set up an Nginx Ingress on E2E Kubernetes and demonstrate host-based routing

Introduction

Kubernetes Ingress is an API object that provides routing rules to manage access to the services within a Kubernetes cluster. Routing is typically done over HTTP and HTTPS.

Ingress is well suited to production environments. It lets users expose services within the Kubernetes cluster without creating multiple load balancers or exposing each service manually.

Moreover, Kubernetes Ingress offers a single entry point for the cluster, which makes it easier for administrators to manage applications and diagnose routing issues. A single entry point also reduces the cluster's attack surface, which improves overall security.

Some use cases of Kubernetes Ingress include:

- Providing externally reachable URLs for services
- Load balancing traffic
- Offering name-based virtual hosting
- Terminating SSL (Secure Sockets Layer) or TLS (Transport Layer Security)

Prerequisites

Before you begin, make sure you have the following:

- An E2E Kubernetes cluster, provisioned with any available version, to serve as the platform for deploying your service workloads. Once the cluster is up and running, download the cluster configuration file (kubeconfig.yaml) from the E2E Kubernetes dashboard and place it in the standard location on your local machine: ~/.kube/config.

- The kubectl command-line tool, installed on your local machine and configured to connect to your cluster. You can read more about installing kubectl in the official documentation.

- An LB IP, reserved and attached through the Kubernetes LB IP section, to expose a service externally via Ingress. Please find the detailed steps to provision an LB IP over here.

- A domain name and DNS A records that you can point to the E2E Kubernetes LB IP used by the Ingress. If you are using E2E to manage your domain’s DNS records, consult the following article for more information.
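The kubeconfig and kubectl prerequisites can be checked before going further. The following is a minimal sketch, assuming the kubeconfig is already in ~/.kube/config; check_cluster_access is an illustrative helper name, not part of kubectl.

```shell
# Sketch: confirm that kubectl can reach the cluster described by ~/.kube/config.
# check_cluster_access is a hypothetical helper name used only for illustration.
check_cluster_access() {
  # Print the control plane endpoint, then list the worker nodes.
  kubectl cluster-info && kubectl get nodes -o wide
}
```

Running check_cluster_access should list the cluster endpoint and its nodes; an error at this point usually means the kubeconfig is missing or points at the wrong cluster.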

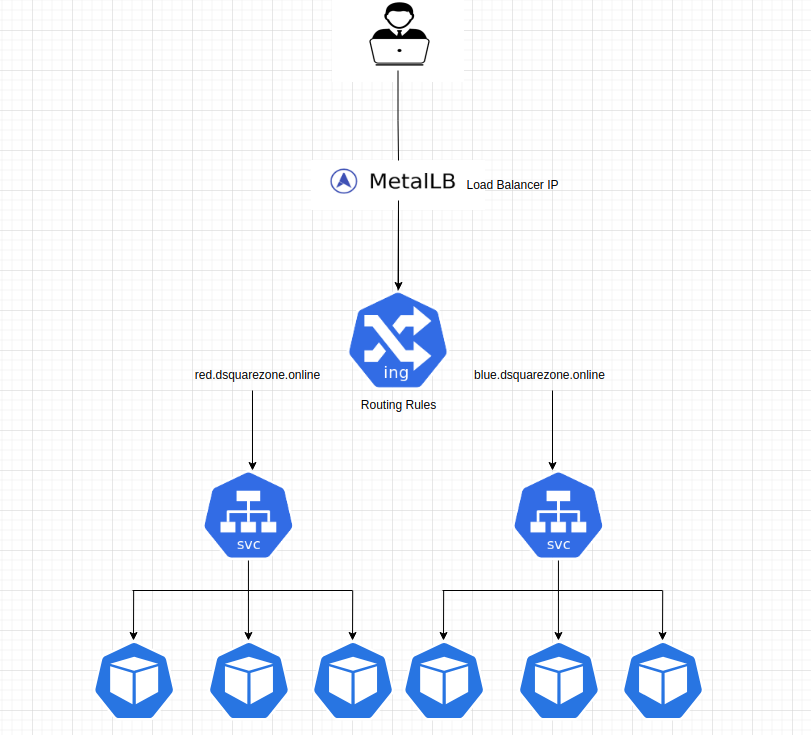

Architecture Reference :

Step 1: Installing the Kubernetes Nginx Ingress Controller

Now install the Kubernetes-maintained Nginx Ingress Controller using Helm.

An ingress controller acts as a reverse proxy and load balancer. It implements a Kubernetes Ingress. The ingress controller adds a layer of abstraction to traffic routing, accepting traffic from outside the Kubernetes platform and load balancing it to Pods running inside the platform. It converts configurations from Ingress resources into routing rules that reverse proxies can recognize and implement.

Installing the Nginx Ingress Controller using Helm

Add the ingress-nginx Helm repository

helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx

Update the Helm repository

helm repo update ingress-nginx

Install the Nginx Ingress Controller with specific settings

helm install --set controller.watchIngressWithoutClass=true --namespace ingress-nginx --create-namespace ingress-nginx ingress-nginx/ingress-nginx

List the Helm releases (deployments) installed in the ingress-nginx namespace

helm list -n ingress-nginx

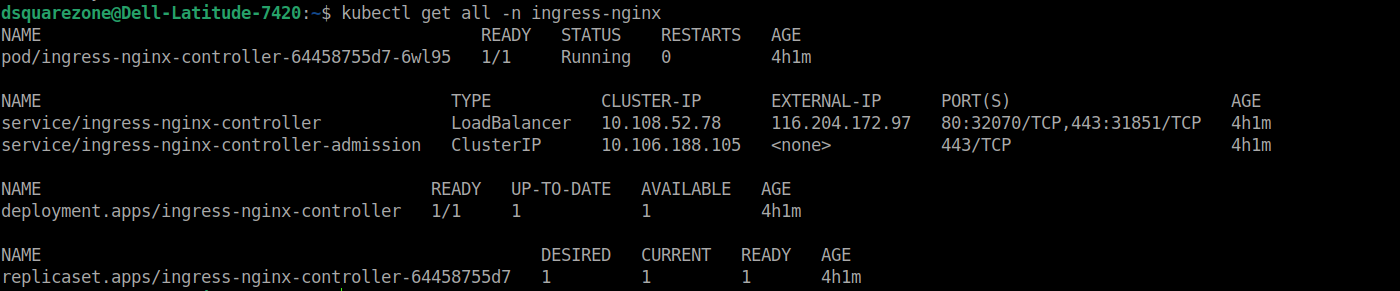
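Before inspecting the created components, it can help to wait until the controller pod reports ready. This is a minimal sketch, assuming the ingress-nginx namespace used above and the chart's default app.kubernetes.io/component=controller label; wait_for_controller and its optional timeout argument are illustrative.

```shell
# Sketch: block until the ingress-nginx controller pod is ready.
# wait_for_controller is a hypothetical helper; the timeout defaults to 120s.
wait_for_controller() {
  kubectl wait --namespace ingress-nginx \
    --for=condition=ready pod \
    --selector=app.kubernetes.io/component=controller \
    --timeout="${1:-120s}"
}
```

For example, wait_for_controller 300s allows up to five minutes on a slow cluster.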

Once the Nginx Ingress installation completes, a few components are created, including the Nginx controller pod and the Nginx controller service. The service is assigned one of the attached LB IPs.

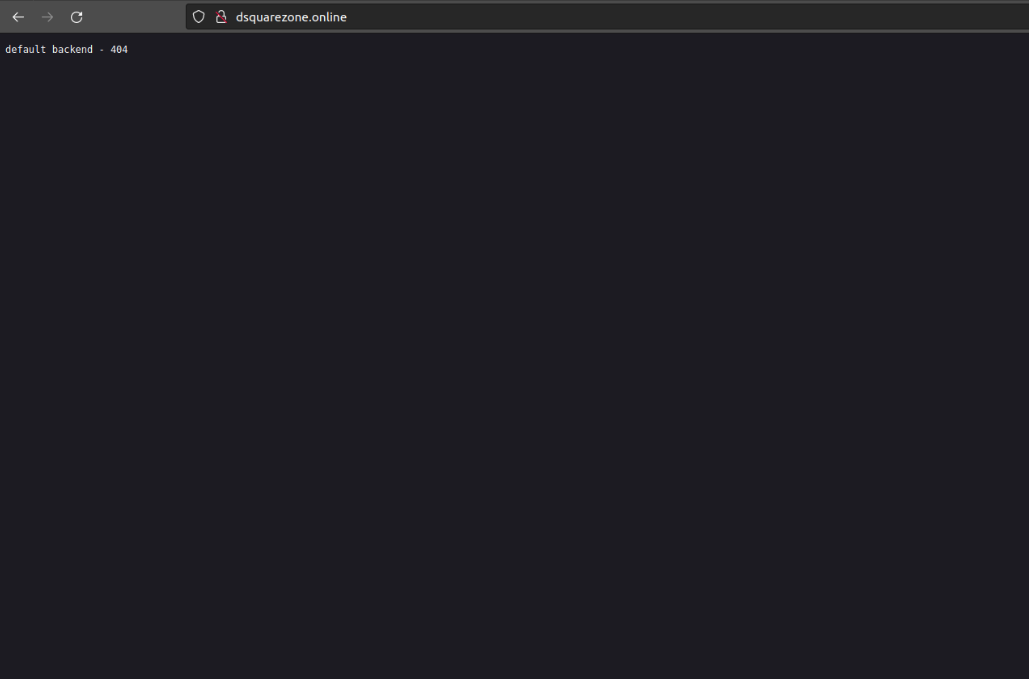

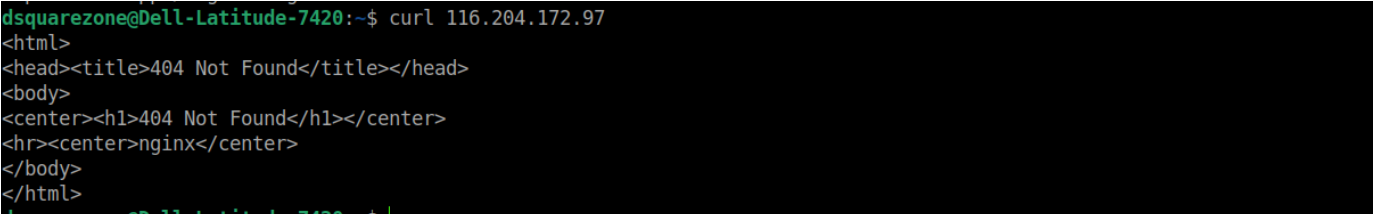

Here, one of the available LB IPs, 116.204.172.97, has been assigned to the ingress controller service. Let us try to access the service IP using curl.
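The lookup and the curl request could look roughly like this. It is a sketch that assumes the service is named ingress-nginx-controller in the ingress-nginx namespace; controller_ip and probe_ip are illustrative helper names.

```shell
# Sketch: read the external IP of the controller service, then request it.
# controller_ip and probe_ip are hypothetical helpers for this guide.
controller_ip() {
  kubectl get service ingress-nginx-controller --namespace ingress-nginx \
    --output jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

probe_ip() {
  # --include prints the response headers so the status line is visible.
  curl --silent --include --max-time 10 "http://$1/"
}
```

Calling probe_ip "$(controller_ip)" sends a plain HTTP request to the LB IP and prints the status line along with the body.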

We get a 404 error because the ingress controller has no ingress routes configured yet.

To avoid this error response, we can configure a default backend service.

For example, when a request comes into the cluster that doesn’t match any rule in your Ingress resources (no matching host or path), the Ingress controller doesn’t know where to route it. Instead of returning an error or leaving the request hanging, it sends the traffic to the default backend service.
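The routing decision described here can be modelled with a small shell function. This is only a toy illustration of host matching with a fallback, not how the controller is implemented; route_for_host is a hypothetical name, and the hostnames and service names are the ones used later in this guide.

```shell
# Toy model of host-based routing with a default backend fallback.
# route_for_host is a hypothetical helper; it only prints a service name.
route_for_host() {
  case "$1" in
    blue.dsquarezone.online) echo "nginxhello-blue" ;;
    red.dsquarezone.online)  echo "nginxhello-red" ;;
    *)                       echo "default-backend" ;;  # no rule matched
  esac
}

route_for_host blue.dsquarezone.online   # prints nginxhello-blue
route_for_host unknown.example.com       # prints default-backend
```

Any host that matches no rule falls through to the last branch, which is the role the default backend service plays in the cluster.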

Step 2: Creating the default backend and static frontend app deployments for host-based routing

Creating the default backend deployment to handle 404 Not Found errors

echo "---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: default-backend
  labels:
    app.kubernetes.io/name: default-backend
    app.kubernetes.io/part-of: default-backend
spec:
  replicas: 1
  selector:
    matchLabels:
      app.kubernetes.io/name: default-backend
      app.kubernetes.io/part-of: default-backend
  template:
    metadata:
      labels:
        app.kubernetes.io/name: default-backend
        app.kubernetes.io/part-of: default-backend
    spec:
      containers:
      - name: default-backend
        image: k8s.gcr.io/defaultbackend-amd64:1.5
        ports:
        - containerPort: 8080
---
apiVersion: v1
kind: Service
metadata:
  name: default-backend
  labels:
    app.kubernetes.io/name: default-backend
    app.kubernetes.io/part-of: default-backend
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 8080
  selector:
    app.kubernetes.io/name: default-backend
    app.kubernetes.io/part-of: default-backend
" | kubectl apply -f -

Creating frontend app deployment called nginxhello-blue

echo "---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginxhello-blue
  labels:
    app.kubernetes.io/name: nginxhello-blue
    app.kubernetes.io/part-of: nginxhello-blue
spec:
  replicas: 1
  selector:
    matchLabels:
      app.kubernetes.io/name: nginxhello-blue
      app.kubernetes.io/part-of: nginxhello-blue
  template:
    metadata:
      labels:
        app.kubernetes.io/name: nginxhello-blue
        app.kubernetes.io/part-of: nginxhello-blue
    spec:
      containers:
      - name: nginxhello-blue
        image: suryaprakash116/blue-container
        ports:
        - containerPort: 80
        env:
        - name: NODE_NAME
          valueFrom:
            fieldRef:
              fieldPath: spec.nodeName
        - name: COLOR
          value: blue
---
apiVersion: v1
kind: Service
metadata:
  name: nginxhello-blue
  labels:
    app.kubernetes.io/name: nginxhello-blue
    app.kubernetes.io/part-of: nginxhello-blue
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app.kubernetes.io/name: nginxhello-blue
    app.kubernetes.io/part-of: nginxhello-blue
" | kubectl apply -f -

Creating frontend app deployment called nginxhello-red

echo "---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginxhello-red
  labels:
    app.kubernetes.io/name: nginxhello-red
    app.kubernetes.io/part-of: nginxhello-red
spec:
  replicas: 1
  selector:
    matchLabels:
      app.kubernetes.io/name: nginxhello-red
      app.kubernetes.io/part-of: nginxhello-red
  template:
    metadata:
      labels:
        app.kubernetes.io/name: nginxhello-red
        app.kubernetes.io/part-of: nginxhello-red
    spec:
      containers:
      - name: nginxhello-red
        image: suryaprakash116/blue-container
        ports:
        - containerPort: 80
        env:
        - name: NODE_NAME
          valueFrom:
            fieldRef:
              fieldPath: spec.nodeName
        - name: COLOR
          value: red
---
apiVersion: v1
kind: Service
metadata:
  name: nginxhello-red
  labels:
    app.kubernetes.io/name: nginxhello-red
    app.kubernetes.io/part-of: nginxhello-red
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app.kubernetes.io/name: nginxhello-red
    app.kubernetes.io/part-of: nginxhello-red
" | kubectl apply -f -

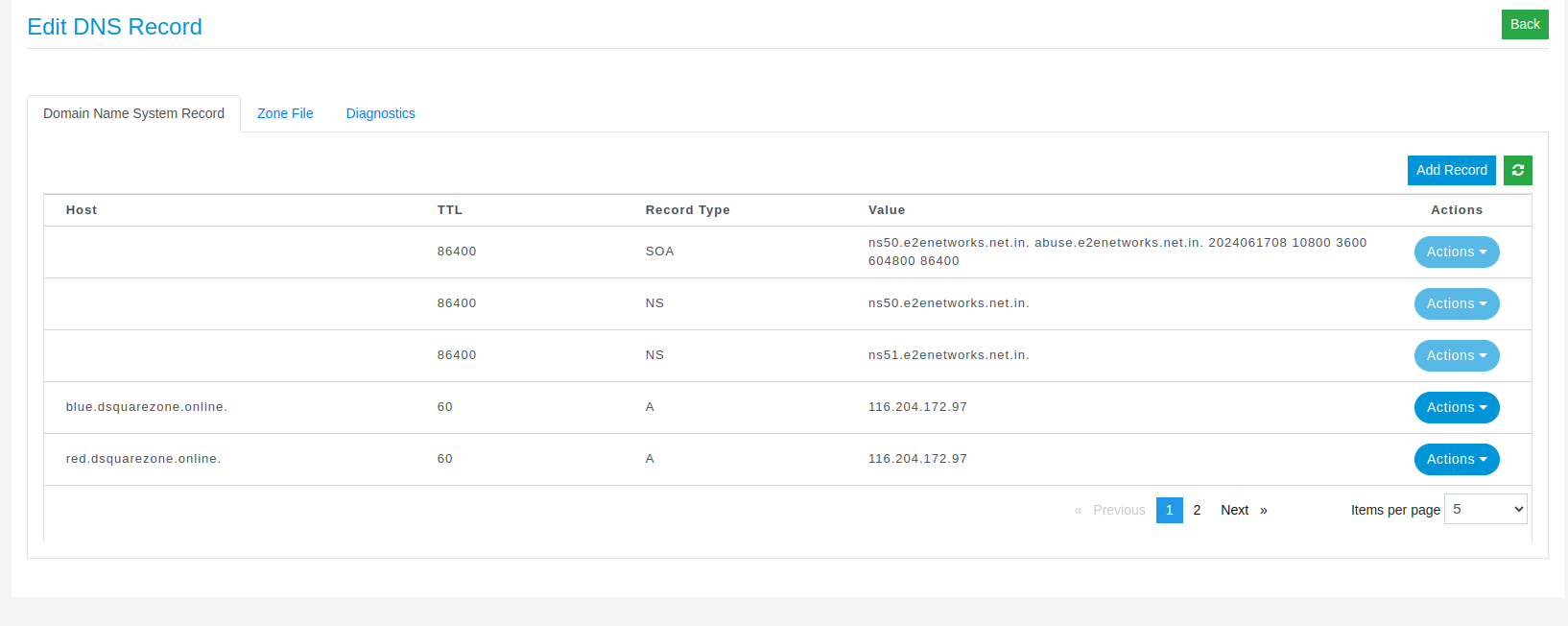
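The three deployments can also be checked from the command line. This is a sketch, assuming they were applied to the current namespace as in the commands of this step; verify_workloads is an illustrative helper name.

```shell
# Sketch: wait for each deployment to finish rolling out, then list resources.
# verify_workloads is a hypothetical helper; names match the manifests in Step 2.
verify_workloads() {
  for name in default-backend nginxhello-blue nginxhello-red; do
    kubectl rollout status "deployment/$name" --timeout=120s || return 1
  done
  kubectl get deployments,services
}
```

The function stops at the first deployment that fails to become available within the timeout.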

Step 3: Configuring DNS for host-based routing

The required application deployments are now in place. Before creating the ingress rule that exposes the services through host-based routing, map the external IP of the ingress-nginx-controller service to your domain name (for example, example.com) using the DNS management portal in the E2E dashboard. Create an A record for each host so that both backend services, nginxhello-red and nginxhello-blue, can be reached by hostname.

Step 4: Creating an ingress rule for host-based routing to both application backend services
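Once the A records are created, name resolution can be checked from the local machine. This is a minimal sketch, assuming the dig utility is installed; check_dns is an illustrative helper name.

```shell
# Sketch: print the address each hostname currently resolves to.
# check_dns is a hypothetical helper; it assumes dig is available locally.
check_dns() {
  for host in "$@"; do
    printf '%s -> %s\n' "$host" "$(dig +short "$host" A | tail -n 1)"
  done
}
```

For example, check_dns blue.dsquarezone.online red.dsquarezone.online should show the LB IP of the controller service for both names once the records have propagated.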

echo "apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: host-rule-ingress
spec:
  defaultBackend:
    service:
      name: default-backend
      port:
        number: 80
  rules:
  - host: blue.dsquarezone.online
    http:
      paths:
      - backend:
          service:
            name: nginxhello-blue
            port:
              number: 80
        pathType: ImplementationSpecific
  - host: red.dsquarezone.online
    http:
      paths:
      - backend:
          service:
            name: nginxhello-red
            port:
              number: 80
        pathType: ImplementationSpecific
" | kubectl apply -f -

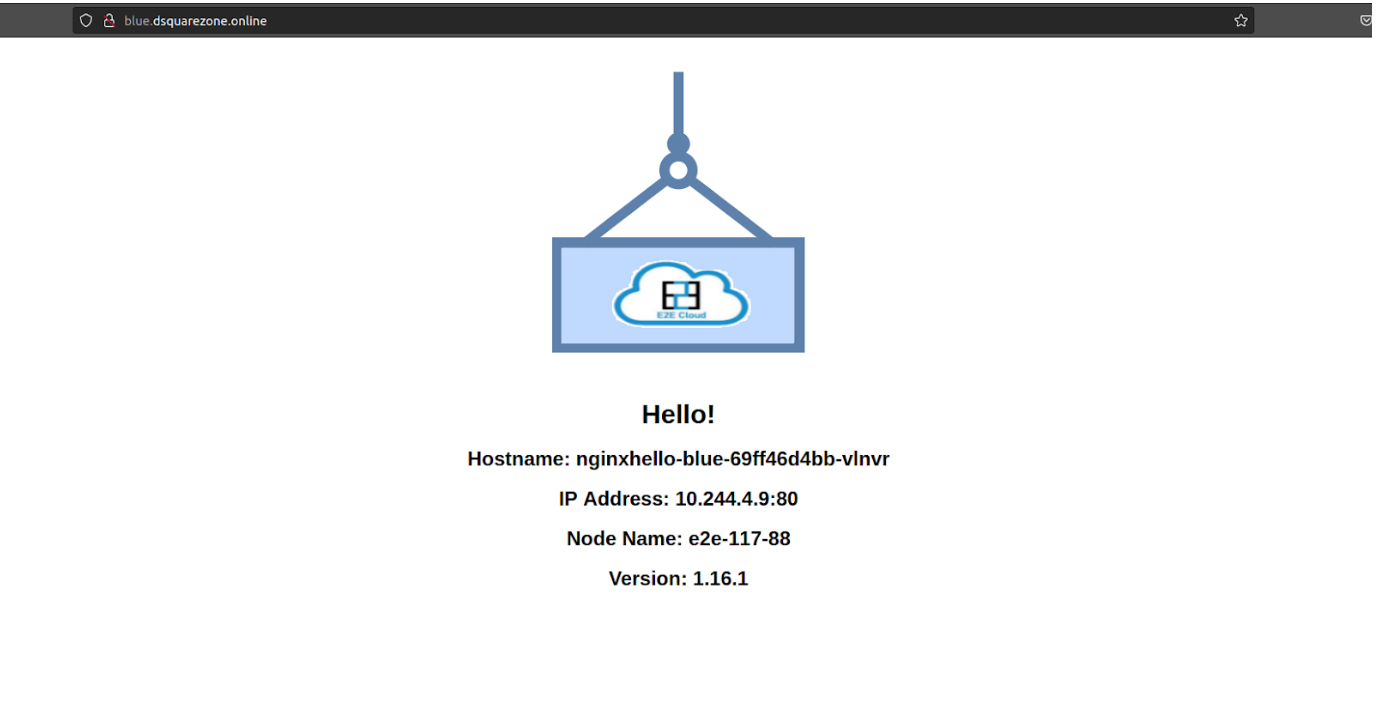

We can now access the nginxhello-blue application at http://blue.dsquarezone.online/ and the nginxhello-red application at http://red.dsquarezone.online/.

Step 5: Creating an ingress rule for the default backend service to handle invalid URL requests
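The same check can be made with curl, including before DNS has propagated, by sending the Host header straight to the LB IP. This is a sketch; probe_host is an illustrative helper name, and the IP is the one assigned to the controller service earlier.

```shell
# Sketch: request a hostname through a specific IP by setting the Host header.
# probe_host is a hypothetical helper: first argument is the host, second the IP.
probe_host() {
  curl --silent --max-time 10 --header "Host: $1" "http://$2/"
}
```

For example, probe_host blue.dsquarezone.online 116.204.172.97 should return the page served by nginxhello-blue, and the red hostname should return the page served by nginxhello-red.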

echo "apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: single-default-backend-ingress
spec:
  defaultBackend:
    service:
      name: default-backend
      port:
        number: 80" | kubectl apply -f -

Check that the ingress controller routes an invalid request to the default backend service by requesting a URL that matches none of the ingress rules.
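One way to run this check is to request a hostname that no ingress rule covers and look at the status code. This is a sketch; status_for_host is an illustrative helper name, and nope.example.com in the usage note is a made-up hostname chosen only because it matches no rule.

```shell
# Sketch: print only the HTTP status code returned for a given Host header.
# status_for_host is a hypothetical helper: first argument is the host, second the IP.
status_for_host() {
  curl --silent --output /dev/null --max-time 10 \
    --write-out '%{http_code}\n' --header "Host: $1" "http://$2/"
}
```

For example, status_for_host nope.example.com 116.204.172.97 sends a request for an unknown host to the LB IP; the response should now come from the default-backend service rather than from the controller itself.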